How Can Enterprises Build Secure, Governed Agentic AI Pipelines at Scale?

Enterprises have spent the last decade learning how to govern data, applications, and users. They are now confronted with a new reality: systems that do not merely process information but act on it. Agentic AI systems read documents, verify identities, access enterprise applications, trigger workflows, and make decisions that carry operational and regulatory consequences.

Yet recent research shows that 95% of executives report AI-related mishaps, while only 2% of organisations meet responsible AI standards. The result is a stark governance gap. At this point, AI is no longer a tool that produces outputs. It becomes an operational participant inside the organisation’s control environment.

To deploy such systems safely at scale, enterprises must design agentic AI pipelines where document authenticity verification, AI identity verification, and AI-based compliance are engineered directly into the pipeline architecture. Governance of this kind is not an afterthought for compliance teams. It is a foundational concern for architects, security leaders, and technology strategists.

This blog explores how enterprises can design agentic AI pipelines that embed governance, identity assurance, document authenticity validation, and policy enforcement directly into their architecture to enable compliant, scalable autonomous AI.

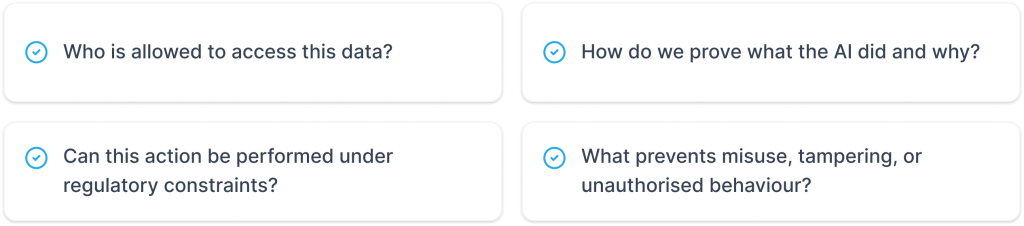

Agentic AI Pipelines as Enterprise Operational Infrastructure

In traditional AI workflows, an input is processed, an output is produced, and a human decides what to do next. Agentic systems, in contrast, remove the human pause and make the output actionable. This shift turns the pipeline into operational infrastructure. It must now answer the kinds of questions typically reserved for enterprise systems.

This is where agentic AI governance becomes essential. The pipeline must operate as a controlled environment in which every step from data ingestion to final action is continuously governed under clear AI compliance and governance controls.

Stage 1 — Ingestion: Document Authenticity Verification as the First Gate

- Structural and metadata validation

- Tamper detection and forgery analysis

- Verification against issuing authorities or trusted registries

- Timestamp and provenance checks
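
The four checks above can be sketched as a single ingestion gate. This is a minimal illustration, not a production design: the registry contents, the required metadata fields, the one-year freshness window, and the names `verify_document` and `CheckResult` are all assumptions made for the example. A real deployment would query an issuing authority or registry service rather than an in-memory dictionary.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical trusted registry: document ID -> SHA-256 digest recorded by the issuer.
TRUSTED_REGISTRY = {
    "INV-001": hashlib.sha256(b"invoice body").hexdigest(),
}

REQUIRED_METADATA = {"doc_id", "issuer", "issued_at"}  # assumed schema
MAX_AGE = timedelta(days=365)                          # assumed freshness window


@dataclass
class CheckResult:
    passed: bool
    reasons: list


def verify_document(content: bytes, metadata: dict, now: datetime) -> CheckResult:
    """Run the ingestion checks and collect every failure reason."""
    # Structural and metadata validation: stop early if fields are missing.
    missing = REQUIRED_METADATA - set(metadata)
    if missing:
        return CheckResult(False, [f"missing metadata: {sorted(missing)}"])

    reasons = []

    # Tamper detection plus registry verification: the content digest
    # must match the digest the issuer recorded for this document ID.
    digest = hashlib.sha256(content).hexdigest()
    expected = TRUSTED_REGISTRY.get(metadata["doc_id"])
    if expected is None:
        reasons.append("document not found in trusted registry")
    elif digest != expected:
        reasons.append("content digest does not match registry record")

    # Timestamp and provenance checks.
    issued_at = datetime.fromisoformat(metadata["issued_at"])
    if issued_at > now:
        reasons.append("issue timestamp is in the future")
    elif now - issued_at > MAX_AGE:
        reasons.append("document is older than the allowed window")

    return CheckResult(not reasons, reasons)


now = datetime(2024, 6, 1, tzinfo=timezone.utc)
meta = {"doc_id": "INV-001", "issuer": "Acme", "issued_at": "2024-01-01T00:00:00+00:00"}
print(verify_document(b"invoice body", meta, now).passed)  # True
print(verify_document(b"edited body", meta, now).passed)   # False
```

Returning the list of reasons, rather than a bare boolean, lets later stages log why a document was rejected.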

Stage 2 — Identity Gate: AI Identity Verification Before System Access

- User credentials are validated against enterprise identity systems

- Identity documents are cross-checked with biometric or behavioural signals

- Access rights align with role and regulatory requirements

- Suspicious patterns or anomalies are detected in real time
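
As a rough sketch, the identity gate above can be modelled as a function that must return a positive result before any system access is granted. Everything named here is hypothetical: the role table, the 0.90 match threshold, the failed-attempt limit, and the `IdentityClaim` fields stand in for whatever the enterprise identity provider and biometric matcher actually supply.

```python
from dataclasses import dataclass
from typing import Tuple

# Hypothetical role-to-permission mapping.
ROLE_PERMISSIONS = {
    "claims_analyst": {"read_claims", "update_claims"},
    "auditor": {"read_claims"},
}

MATCH_THRESHOLD = 0.90   # assumed minimum document/biometric match score
MAX_FAILED_ATTEMPTS = 3  # assumed anomaly threshold


@dataclass
class IdentityClaim:
    user_id: str
    role: str
    credential_valid: bool       # outcome reported by the enterprise identity system
    biometric_match: float       # 0.0-1.0 score from an upstream matcher
    recent_failed_attempts: int  # simple stand-in for an anomaly signal


def identity_gate(claim: IdentityClaim, requested_permission: str) -> Tuple[bool, str]:
    """Return (allowed, reason); access is denied on the first failed check."""
    if not claim.credential_valid:
        return False, "credentials rejected by identity system"
    if claim.biometric_match < MATCH_THRESHOLD:
        return False, "identity document does not match biometric signal"
    if requested_permission not in ROLE_PERMISSIONS.get(claim.role, set()):
        return False, "role does not grant requested permission"
    if claim.recent_failed_attempts >= MAX_FAILED_ATTEMPTS:
        return False, "anomalous access pattern detected"
    return True, "verified"


analyst = IdentityClaim("u-17", "claims_analyst", True, 0.97, 0)
print(identity_gate(analyst, "update_claims"))  # (True, 'verified')
```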

Stage 3 — Policy Enforcement: AI for Real-Time Regulatory Compliance

- Data residency rules

- Approval workflows

- Encryption standards

- Risk thresholds

Once inputs are trusted and identities are verified, the pipeline moves to the decision layer. This is where policy stops being documentation and starts operating as code. Here, regulatory compliance is not monitored after the fact. It is enforced in real-time through defined policy enforcement points. Access to sensitive datasets is automatically restricted. Actions that could breach data residency rules are prevented. High-risk operations are paused for additional approvals. Non-compliant paths are blocked before they can be executed.

This is also where agentic AI governance becomes practical and measurable. The system does not rely on the agent to “do the right thing”. The pipeline is designed so that the wrong thing simply cannot happen.
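
To make "policy as code" concrete, here is a small sketch of a policy enforcement point that returns one of three verdicts. The region pairs, the 0.7 approval threshold, and the `ActionRequest` fields are illustrative assumptions, not rules drawn from any specific regulation.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    ALLOW = "allow"
    REQUIRE_APPROVAL = "require_approval"
    DENY = "deny"


@dataclass(frozen=True)
class ActionRequest:
    action: str
    data_region: str    # where the data resides
    target_region: str  # where the action would move or process it
    encrypted: bool
    risk_score: float   # 0.0-1.0, assumed to be computed upstream


ALLOWED_TRANSFERS = {("sa", "sa")}  # hypothetical data residency rule
APPROVAL_THRESHOLD = 0.7            # hypothetical risk threshold


def enforce(request: ActionRequest) -> Verdict:
    """Evaluate a request before execution; non-compliant paths never run."""
    if (request.data_region, request.target_region) not in ALLOWED_TRANSFERS:
        return Verdict.DENY               # would breach data residency
    if not request.encrypted:
        return Verdict.DENY               # fails the encryption standard
    if request.risk_score >= APPROVAL_THRESHOLD:
        return Verdict.REQUIRE_APPROVAL   # pause for a human approval workflow
    return Verdict.ALLOW


print(enforce(ActionRequest("export_report", "sa", "eu", True, 0.1)))  # Verdict.DENY
```

Because the verdict is computed before the action runs, the agent never gets the chance to execute a denied path; it can only proceed on `ALLOW` or wait on `REQUIRE_APPROVAL`.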

Stage 4 — Decision and Action: AI-Based Compliance Embedded in Behaviour

- Actions remain within the authorised scope

- Decisions are recorded with contextual metadata

- The reasoning path is preserved for traceability

- No action bypasses governance checkpoints
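
One way to picture these properties is an executor that is the only route through which an agent can act. The sketch below is an assumption-laden illustration: `GovernedExecutor`, `ScopeViolation`, and the log fields are invented for the example, and the reasoning path is simply whatever list of steps the caller passes in.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


class ScopeViolation(Exception):
    """Raised when an agent requests an action outside its authorised scope."""


@dataclass
class GovernedExecutor:
    authorised_actions: frozenset
    decision_log: list = field(default_factory=list)

    def execute(self, agent_id, action, handler, reasoning, **params):
        allowed = action in self.authorised_actions
        # Record the decision with contextual metadata, including refusals,
        # so the reasoning path is preserved either way.
        self.decision_log.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent_id,
            "action": action,
            "params": params,
            "reasoning": reasoning,
            "allowed": allowed,
        })
        if not allowed:
            raise ScopeViolation(f"{action!r} is outside the authorised scope")
        return handler(**params)


executor = GovernedExecutor(frozenset({"send_notice"}))
result = executor.execute(
    "agent-1",
    "send_notice",
    lambda customer: f"notice sent to {customer}",
    reasoning=["document verified", "identity verified", "policy verdict: allow"],
    customer="C-42",
)
print(result)  # notice sent to C-42
```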

Stage 5 — Observability: Proving AI Compliance and Governance

In agentic AI systems, governance cannot rely on policy alone. It must be observable, traceable, and defensible. An effective observability layer records the full decision trail across the pipeline: which documents passed authenticity checks, which identities were verified, which compliance policies were invoked, and the rationale behind every decision. It also captures the actions executed and the entity responsible for them.

This creates a reliable audit trail that regulators, auditors, and risk teams can interrogate with confidence. Governance, therefore, moves from being a stated intent to a demonstrable, evidence-backed capability.
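
One common way to make such a trail defensible is to chain entries by hash so that later edits are detectable. The article does not prescribe this technique; the sketch below is offered as one possible approach, with the `AuditTrail` class and event fields invented for illustration.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry


class AuditTrail:
    """Append-only log in which each entry commits to the one before it."""

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(prev_hash: str, event: dict) -> str:
        payload = json.dumps(event, sort_keys=True)
        return hashlib.sha256((prev_hash + payload).encode()).hexdigest()

    def record(self, event: dict) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        entry_hash = self._digest(prev_hash, event)
        self.entries.append({"event": event, "prev_hash": prev_hash, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute the chain; any altered or reordered entry breaks it."""
        prev_hash = GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash:
                return False
            if entry["hash"] != self._digest(prev_hash, entry["event"]):
                return False
            prev_hash = entry["hash"]
        return True


trail = AuditTrail()
trail.record({"stage": "ingestion", "doc_id": "INV-001", "result": "pass"})
trail.record({"stage": "identity", "user_id": "u-17", "result": "verified"})
print(trail.verify())  # True

trail.entries[0]["event"]["result"] = "fail"  # simulate tampering
print(trail.verify())  # False
```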

Stage 6 — Scaling Agentic AI Pipelines Without Scaling Risk

- Document authenticity verification

- AI-powered ID verification

- Policy enforcement

- Audit logging

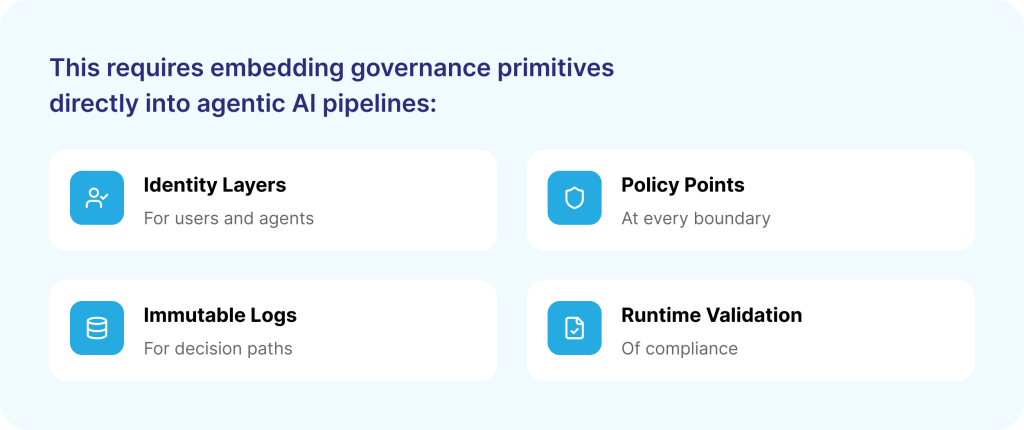

Engineering Agentic AI Governance into the Architecture

A common mistake is treating governance as documentation. In agentic systems, governance must be engineered.

The practical outcome is straightforward: no agent decision occurs without passing through governance checkpoints. The pipeline itself becomes the enforcement mechanism for AI compliance and governance.
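
In code terms, this means the action is only reachable through the checkpoints. The following is a deliberately small sketch of that idea; `run_governed` and the lambda checkpoints are hypothetical placeholders for the real document, identity, and policy gates.

```python
from typing import Callable, Dict, List, Tuple

# A checkpoint inspects the shared context and returns (passed, reason).
Checkpoint = Callable[[Dict], Tuple[bool, str]]


def run_governed(
    context: Dict,
    checkpoints: List[Tuple[str, Checkpoint]],
    action: Callable[[Dict], object],
) -> Dict:
    """Run every checkpoint in order; execute the action only if all pass."""
    for name, check in checkpoints:
        passed, reason = check(context)
        if not passed:
            return {"executed": False, "blocked_by": name, "reason": reason}
    return {"executed": True, "result": action(context)}


checkpoints = [
    ("document", lambda ctx: (ctx["doc_ok"], "document check")),
    ("identity", lambda ctx: (ctx["id_ok"], "identity check")),
    ("policy", lambda ctx: (ctx["policy_ok"], "policy check")),
]

outcome = run_governed(
    {"doc_ok": True, "id_ok": False, "policy_ok": True},
    checkpoints,
    action=lambda ctx: "approved",
)
print(outcome["executed"], outcome["blocked_by"])  # False identity
```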

The Role of AI Governance Services in Enterprise Implementation

Building structured, compliant AI pipelines is not just a technical exercise; it also demands specialised governance thinking. That is where AI governance services make a real difference.

They help enterprises put the right controls in place from the start: verifying documents as they enter the system, strengthening access with AI-led identity checks, embedding regulatory compliance directly into policy layers, and creating full observability across the pipeline.

In essence, these services turn regulatory intent into a working architecture that teams can trust every day.

SquareOne: Your Go-To Tech Partner in Saudi Arabia

As organisations adopt agentic AI, the real priority shifts from intelligence to trust and control. SquareOne enables enterprises in Saudi Arabia to build AI pipelines where governance is embedded from the start. From document authenticity and AI-led identity checks to policy-driven compliance at decision points, every layer is secured. End-to-end observability ensures every action, decision, and access point is traceable and auditable. The result is agentic AI that is intelligent, compliant, and secure by design from day one.

Conclusion

The maturity of an agentic system is not measured by the sophistication of its model but by the strength of the pipeline that governs how it acts.

When agentic AI governance, document authenticity verification, AI identity verification, and AI-based compliance are engineered into agentic AI pipelines, enterprises create systems that can operate autonomously without creating unmanaged risk.

At enterprise scale, trust is not built through capability alone. It is built through control, accountability, and demonstrable governance, all of which reside in the pipeline.